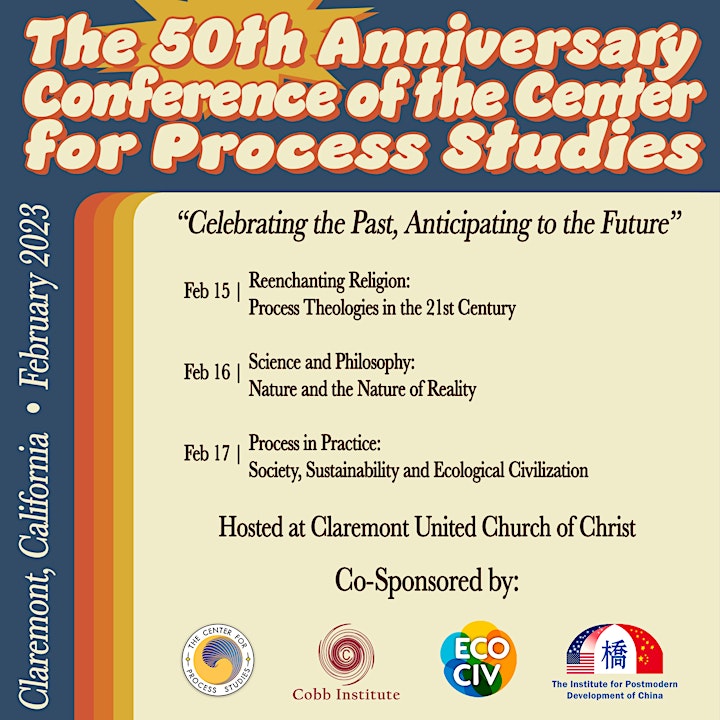

50th Anniversary Conference of the Center for Process Studies

A 3-day celebration of the 50-year legacy of CPS and its creative transformation in the context of a new generation of process thinkers

Date and time

Wed, Feb 15, 2023, 9:00 AM – Fri, Feb 17, 2023, 6:00 PM PST

Location

Claremont United Church of Christ

233 Harrison Avenue

Claremont, CA 91711

CONFERENCE VISION AND RATIONALE

Founded in 1973 by John B. Cobb Jr. and David Ray Griffin, the Center for Process Studies (CPS) is approaching its 50th anniversary as a faculty research center of Claremont School of Theology. Under the leadership of Cobb and Griffin, CPS earned a global reputation as the Southern California hub for the study of process philosophy and theology. Through rigorous research, cutting-edge conferences, and academic publications, Cobb and Griffin enacted the mission of CPS to facilitate the development, relevance and application of Whitehead’s philosophy across disciplines. Subsequent co-directors Marjorie Suchocki (1990), Philip Clayton (2003), Roland Faber (2006), Monica Coleman (2008) and others built upon the foundations of Cobb and Griffin and continued to lead CPS into new generations of research and relevance. Now led by executive director Wm. Andrew Schwartz (2013) and program director Andrew M. Davis (2020), CPS enters its 50th year indebted to the contributions of the past, but looking toward the novelty of the future.

Co-sponsored by The Cobb Institute, The Institute for Ecological Civilization, and the Institute for the Postmodern Development of China, the “50th Anniversary Conference of the Center for Process Studies” celebrates the 50-year legacy of CPS and its creative transformation in the context of a new generation of process thinkers. Three core layers of investigation, each with four respective sessions (A-D), are proposed in light of this purpose:

1. Reenchanting Religion: Process Theology in the 21st Century (Feb 15th)

- A. Divinity Reimagined: What is novel in the explication and/or reception of a process doctrine (or model) of God over and against its alternatives? Where are challenges and opportunities arising in current debates and conversations?

- B. Religious Experience and Religious Belonging: What forms of religious experience, intuition and belonging are substantiated and/or produced from within process theology as creatively expressed across ecclesial settings? What does process theology/spirituality look like when lived out communally?

- C. Panpsychism and Religious Naturalism: In what ways are process conceptions of panpsychism (or panexperientialism) relevant to the reformulation of central Christian doctrines (e.g., creation, Christology and divine action)? What is the relationship between panpsychism and affirmations of religious naturalism?

- D. Beyond Dialogue and Deep Religious Pluralism: Where are new connections being made between process theology and different religious systems and thinkers? In what ways is process theology informing new discussions of religious pluralism and multiplicity?

2. Science and Philosophy: Nature and the Nature of Reality (Feb 16th)

- A. Facts, Values and Possibilities: How does process thought understand the relationship between facts, values and possibilities as variously expressed from physics to metaphysics? In what ways does process philosophy offer an interpretive metaphysics for the findings of physics?

- B. New Materialism, Poststructuralism, and Process Philosophy: Where do points of connection lie between process philosophy, new materialism and poststructuralism? What new voices/insights are being integrated and what interdisciplinary insights are emerging?

- C. Brains, Souls and Self: What new contributions is process thought making to related concerns and questions in neurology, psychology and psychiatry? Where have advances been made with respect to the brain, the mind-body problem, personal identity and the self, and personality growth and transformation?

- D. Art, Beauty and Creativity: In what ways does process philosophy inform aesthetic experience, theory, and expression? Where are new developments and connections being made across artistic disciplines?

3. Process in Practice: Society, Sustainability and Ecological Civilization (Feb 17th)

- A. Economies and Communities for the Common Good: In what ways is process thought informing and/or critiquing economic theories, policies and measures? What practical revisions are being proposed and where? To what end are these revisions sought and for which communities?

- B. Power, Peace and Politics: What resources does process thought sustain in attempts to remedy deepening bifurcations across politics, society, and culture? What extremes does process thought seek to avoid in political theory, governance, and power? How is peace possible given the dysfunctional entanglement of power and politics?

- C. Process Philosophy and Education: How is process philosophy informing and/or transforming educational theory and practice? Where do theoretical and practical weaknesses lie from grade-school to university settings and beyond? How is process philosophy responding to the crises in education?

- D. Environmental Ethics and Ecological Civilization: What theoretical and practical ethical measures does process thought support and/or justify in light of the ecological crisis and the quest for an ecological civilization? What requires transformation in our relation to the environment and its human and non-human “others”?

It remains a truly exciting time for the global process movement, with a new generation of scholars leading the way. This conference aims not only to celebrate the 50-year legacy of CPS, but also to anticipate its continued relevance and creative transformation in the decades ahead.

Image: Alfred North Whitehead

About The Center for Process Studies

Our Mission

Driven by the principle of relationality and a commitment to the common good, the Center for Process Studies (CPS) engages in cutting-edge discourse across disciplines to promote the exploration of interconnection, change, and intrinsic value as core features of our world.

As a faculty-based research center at Claremont School of Theology (CST), CPS conducts research and develops educational resources that explore the implications of these principles for a range of topics (e.g., science, ecology, culture, philosophy, religion, education, psychology, and political theory). It does so in a transdisciplinary style that harmonizes fragmented disciplinary thinking in order to develop integrated and holistic modes of understanding.

The CPS mission is carried out through academic conferences, courses, and seminars, a robust visiting scholars program, the world’s largest library related to process-relational writings, and an array of publications (including a peer-reviewed journal and a number of active book series).

Our History

The Center for Process Studies (CPS) is a faculty-based research center at Claremont School of Theology, in affiliation with Claremont Graduate University. The Center conducts research, publishes scholarly and popular works, organizes courses and conferences, and seeks to promote the common good for our common world by means of the relational approach found in process thought.

Influenced by the work of Alfred North Whitehead and John B. Cobb, Jr., CPS is dedicated to the expansive exploration of ever-new sectors of academia and society through the transformative and ever-transforming tradition of process thinking. Process thinkers engage many different religious traditions and non-religious worldviews, working to both create positive social change and protect the natural environment. Among the Center’s publications are: Process Studies, the leading refereed journal in the field; Process Perspectives, a popular newsmagazine; and multiple series of books and publications stemming from its various projects.

Founded in 1973 by John B. Cobb, Jr. and David Ray Griffin as a faculty center of Claremont School of Theology in affiliation with Claremont Graduate University, the Center for Process Studies was established to facilitate the development and application of a relational worldview. Marjorie Hewitt Suchocki became a co-director in 1990, Philip Clayton in fall 2003, Roland Faber in January 2006, and Monica A. Coleman in fall 2008. In fall 2013, Wm. Andrew Schwartz (a PhD student at the time) was appointed Managing Director of CPS. In this capacity, he played a central role in organizing the 2000-person "Seizing an Alternative" conference in June 2015. Upon completing his PhD in fall 2016, Schwartz was appointed Executive Director of CPS. As of summer 2020, CPS is affiliated with Willamette University and located in Salem, OR.

Through seminars, conferences, publications, a library/archive, and a visiting scholars program, the Center for Process Studies encourages research on the form of process thought that received its primary impetus from philosophers Alfred North Whitehead (1861-1947) and Charles Hartshorne (1897-2000).

CPS contributes to the development of a new cultural paradigm of systematic metaphysics influenced by a relational worldview. As a resource for scholars and professionals, CPS coordinates multidisciplinary and trans-disciplinary research on pressing issues while seeking to avoid the inertia and limitations of segregated university disciplines. Where other new paradigm institutes focus on singular issues—like ecology, agriculture, feminism, race and class, decentralized political economic theory, or appropriate technology—a typical process focus is to integrate these issues through a non-dualistic worldview applicable to a wide range of issues.

Deeply appreciative of the natural sciences, process philosophy uniquely integrates science, religion, ethics, and aesthetics. It portrays the cosmos as an organic whole analyzable into internally related processes. In this way process thought offers a postmodern alternative to the mechanistic model that still influences much scientific work and is presupposed in much humanistic literature. The relational process perspective deals with multicultural, feminist, ecological, inter-religious, political, philosophical, and economic issues. Process thought provides a basis for discussion between Eastern and Western religious and cultural traditions. It offers an agenda on the social, political, and economic order that brings issues of human justice together with a concern for ecology. In these and other ways, process thinkers hope to contribute to those movements that will carry the world beyond war, injustice, and despair.